As noted by Arkes et al, 7 physicians are well equipped with a body of knowledge to make independent and informed decisions.

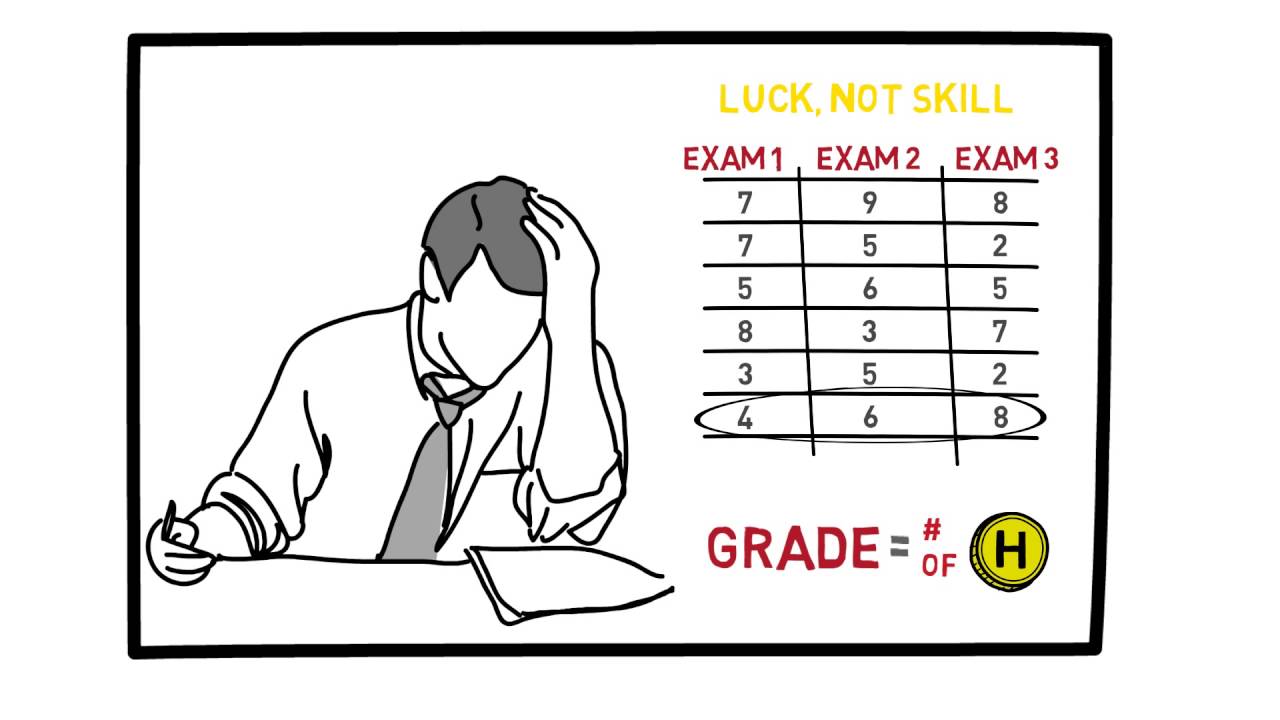

Not all the diagnoses were equally plausible as shown by the higher probabilities given by the foresight group to Reiter’s syndrome (44%) and gout (29%). When compared with the foresight group, these two hindsight groups were 2–3 times more likely to make a diagnosis in accordance with the outcome knowledge expressed in the first sentence. The physicians exhibited evidence of hindsight bias, but the bias was confined to the two diagnoses (post-streptococcal arthritis and hepatitis) that were assigned the lowest probability estimates by the foresight group. As the instructions requested, all gave their independent diagnosis of the patient. The remaining four groups were hindsight groups, each of which received the same information except for a different first sentence as follows: (1) this is a case history of Reiter’s syndrome (2) this is a case history of post-streptococcal arthritis in an adult, (3) this is a case history of gout, and (4) this is a case history of hepatitis. The first group, called the foresight group, was given the case history and simply asked to assign probability estimates to each of the four possible diagnoses. This task was given to 75 physicians divided into five groups. Your task is to assign probabilities to each of four given diagnoses so that the probabilities sum up to 100%. You also receive laboratory test results, but not all of them are back. A few days later similar symptoms develop in his left wrist and right knee. 7 Imagine as a practising physician that you are given a case history to read of a 37 year old bartender who developed increasing pain in his left knee which had become hot and swollen. The following case history is representative of the experimental procedure. 9– 11 Robust reports of it come from a variety of domains including medical diagnoses, legal rulings, financial forecasts, election returns, business outcomes, sporting events, and military campaigns. Progressively working backwards through a causal framework with a known outcome runs the risk of delusional clarity and important lessons unlearned.Ĭonvincing demonstrations of hindsight bias are well established experimentally 4– 8 and the phenomenon has been the subject of extensive reviews. Dekker 3 aptly reminds us that the point of investigations of human error is not to find where people went wrong, but to understand why their assessments and actions made sense at the time.

If investigations of adverse events are to be fair and yield new knowledge, greater focus and sensitivity needs to be given to recreating the muddled web of precursory and proximal circumstances that existed for personnel at the sharp end before the mishap occurred. Such hindsight results in expectations by investigators that participants should have anticipated the mishap by foresight.

“Why couldn’t they see it?” is the question that is often asked. In fact, it may be difficult to imagine it happening any other way. Given the advantage of a known outcome, what would have been a bewildering array of non-convergent events becomes assimilated into a coherent causal framework for making sense out of what happened. While most people would not expect much credit for picking a horse after it has won the race, many investigators are unaware of the influence of outcome knowledge on their perceptions and reconstructions of the event. Investigators have the luxury in hindsight of knowing how things are going to turn out nurses, physicians, and technicians at the sharp end do not. As noted by Reason, 2 the most significant psychological difference between individuals who were involved in events leading up to a mishap and those who are called upon to investigate it after it has occurred is knowledge of the outcome.

Proclamations about medical error are almost always made after the event, rarely before.